Bonding, also called port trunking or link aggregation, means combining several network interfaces (NICs) into a single link, providing high availability, load balancing, maximum throughput, or a combination of these. See Wikipedia for details.

Step 1: Ensure kernel support

Before Ubuntu can configure your network cards into a NIC bond, you need to ensure that the correct kernel module bonding is present, and loaded at boot time.

Edit your /etc/modules configuration:

Ensure that the bonding module is loaded:

Note: Starting with Ubuntu 9.04, this step is optional if you are configuring bonding with ifup/ifdown. In this case, the bonding module is automatically loaded when the bond interface is brought up.
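As a rough sketch of the step above, the helper below appends a module name to a modules file only when it is not already listed. The function name `ensure_module_line` is made up for illustration; it takes the target file as an argument so you can try it on a scratch file before pointing it at /etc/modules (which needs root).

```shell
# Hypothetical helper: add a module name to a modules file exactly once.
# $1 = module name (e.g. bonding), $2 = path to the modules file.
ensure_module_line() {
    module="$1"
    file="$2"
    touch "$file"
    # -x: match the whole line, -F: literal string, -q: no output
    if ! grep -qxF "$module" "$file"; then
        printf '%s\n' "$module" >> "$file"
    fi
}
```

For example, `ensure_module_line bonding /tmp/modules.test` can be run repeatedly without creating duplicate lines.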

Step 2: Configure network interfaces

Ensure that your network is brought down:

Then load the bonding kernel module:

Now you are ready to configure your NICs.

A general guideline is to:

- Pick which available NICs will be part of the bond.

- Configure all other NICs as usual

- Configure all bonded NICs:

- To be manually configured

- To join the named bond-master

- Configure the bond NIC as if it were a normal NIC

- Add bonding-specific parameters to the bond NIC as follows.

Edit your interfaces configuration:

For example, to combine eth0 and eth1 as slaves to the bonding interface bond0 using a simple active-backup setup, with eth0 being the primary interface:

The bond-primary directive, if needed, needs to be part of the slave description (eth0 in the example), instead of the master. Otherwise it will be ignored.
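The active-backup layout described above might look like the following sketch. It writes a sample stanza to a file of your choice for review instead of editing /etc/network/interfaces directly. The function name `write_sample_interfaces` is hypothetical, and the addresses are placeholders from the 192.0.2.0/24 documentation range, not values from this page.

```shell
# Hypothetical helper: write a sample active-backup configuration to "$1".
# Addresses below are placeholders; replace them with your own.
write_sample_interfaces() {
    cat > "$1" <<'EOF'
# eth0 is manually configured and joins the bond; it is also the primary
auto eth0
iface eth0 inet manual
    bond-master bond0
    bond-primary eth0

# eth1 joins the same bond as the backup link
auto eth1
iface eth1 inet manual
    bond-master bond0

# the bond itself is configured like a normal NIC
auto bond0
iface bond0 inet static
    address 192.0.2.10
    gateway 192.0.2.1
    netmask 255.255.255.0
    bond-mode active-backup
    bond-miimon 100
    bond-slaves none
EOF
}
```

Running `write_sample_interfaces /tmp/interfaces.sample` produces a file you can compare against your real configuration. Note that bond-primary sits in the eth0 stanza, as the text above requires.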

As another example, to combine eth0 and eth1 using the IEEE 802.3ad LACP bonding protocol:

The bond statements in 12.04 are dashed instead of underscored. The config above is updated based on Stéphane Graber’s bonding example at :

The configuration as provided above works with Ubuntu 12.10 out of the box — michel-drescher, 10 Nov 2012

For bonding-specific networking options please consult the documentation available at BondingModuleDocumentation.

Finally, bring up your network again:

Link information is available under /proc/net/bonding/. To check bond0 for example:
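Besides reading the file with `cat /proc/net/bonding/bond0`, a short awk filter can reduce it to one line per slave. This is a minimal sketch that assumes the usual layout of that file ("Slave Interface:" followed by "MII Status:" lines); the function name `bond_slave_status` is made up for illustration.

```shell
# Hypothetical helper: print "<slave> <MII status>" for each slave listed
# in a bonding status file such as /proc/net/bonding/bond0.
bond_slave_status() {
    awk '
        /^Slave Interface:/ { iface = $3 }
        # the first MII Status line belongs to the bond itself, so it is
        # skipped until a slave section has been seen
        /^MII Status:/ && iface != "" { print iface, $3 }
    ' "$1"
}
```

Usage: `bond_slave_status /proc/net/bonding/bond0`.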

To bring up the bonding interface, run

To bring down a bonding interface, run

Ethernet bonding has different modes you can use. You specify the mode for your bonding interface in /etc/network/interfaces. For example:

Descriptions of bonding modes

Mode 0 (balance-rr)

Round-robin policy: Transmit packets in sequential order from the first available slave through the last. This mode provides load balancing and fault tolerance.

Mode 1 (active-backup)

Active-backup policy: Only one slave in the bond is active. A different slave becomes active if, and only if, the active slave fails. The bond’s MAC address is externally visible on only one port (network adapter) to avoid confusing the switch. This mode provides fault tolerance. The primary option affects the behavior of this mode.

Mode 2 (balance-xor)

XOR policy: Transmit based on a selectable hashing algorithm. The default policy is a simple source+destination MAC address algorithm. Alternate transmit policies may be selected via the xmit_hash_policy option, described below. This mode provides load balancing and fault tolerance.

Mode 3 (broadcast)

Broadcast policy: Transmits everything on all slave interfaces. This mode provides fault tolerance.

Mode 4 (802.3ad)

IEEE 802.3ad Dynamic link aggregation. Creates aggregation groups that share the same speed and duplex settings. Utilizes all slaves in the active aggregator according to the 802.3ad specification.

Prerequisites:

- Ethtool support in the base drivers for retrieving the speed and duplex of each slave.

- A switch that supports IEEE 802.3ad Dynamic link aggregation. Most switches will require some type of configuration to enable 802.3ad mode.

Mode 5 (balance-tlb)

Adaptive transmit load balancing: channel bonding that does not require any special switch support. The outgoing traffic is distributed according to the current load (computed relative to the speed) on each slave. Incoming traffic is received by the current slave. If the receiving slave fails, another slave takes over the MAC address of the failed receiving slave.

Prerequisites:

- Ethtool support in the base drivers for retrieving the speed of each slave.

Mode 6 (balance-alb)

Adaptive load balancing: includes balance-tlb plus receive load balancing (rlb) for IPv4 traffic, and does not require any special switch support. The receive load balancing is achieved by ARP negotiation. The bonding driver intercepts the ARP replies sent by the local system on their way out and overwrites the source hardware address with the unique hardware address of one of the slaves in the bond, such that different peers use different hardware addresses for the server.

Descriptions of balancing algorithm modes

The balancing algorithm is set with the xmit_hash_policy option.

Possible values are:

layer2 Uses XOR of hardware MAC addresses to generate the hash. This algorithm will place all traffic to a particular network peer on the same slave.

layer2+3 Uses XOR of hardware MAC addresses and IP addresses to generate the hash. This algorithm will place all traffic to a particular network peer on the same slave.

layer3+4 This policy uses upper layer protocol information, when available, to generate the hash. This allows for traffic to a particular network peer to span multiple slaves, although a single connection will not span multiple slaves.

encap2+3 This policy uses the same formula as layer2+3 but it relies on skb_flow_dissect to obtain the header fields which might result in the use of inner headers if an encapsulation protocol is used.

encap3+4 This policy uses the same formula as layer3+4 but it relies on skb_flow_dissect to obtain the header fields which might result in the use of inner headers if an encapsulation protocol is used.

The default value is layer2. This option was added in bonding version 2.6.3. In earlier versions of bonding, this parameter does not exist, and the layer2 policy is the only policy. The layer2+3 value was added for bonding version 3.2.2.
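To make the layer2 behaviour concrete, here is a simplified sketch of the idea: XOR the last octet of the source and destination MAC addresses, then take the result modulo the number of slaves. It is an illustration only. The function name `layer2_slave` is hypothetical, and the real driver's hash differs between kernel versions (newer kernel documentation also mixes in the packet type ID).

```shell
# Hypothetical illustration of a layer2-style hash.
# $1 = source MAC, $2 = destination MAC, $3 = number of slaves.
# Prints the index (starting at 0) of the slave that would carry the traffic.
layer2_slave() {
    src_octet=$(( 0x${1##*:} ))   # last octet of the source MAC
    dst_octet=$(( 0x${2##*:} ))   # last octet of the destination MAC
    echo $(( (src_octet ^ dst_octet) % $3 ))
}
```

Because the result depends only on the two MAC addresses, every packet between the same pair of hosts lands on the same slave. This is why layer2 does not spread traffic when all of it goes to a single gateway.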

UbuntuBonding (last edited by sbright, 2017-11-07 14:58:45)

Introduction

In this article, we’ll look at how to set up LACP bonding on a server running Ubuntu. LACP bonding uses the Link Aggregation Control Protocol to combine two network interfaces into one logical interface. Today, we’ll use it to combine two Ethernet interfaces. This is useful to increase the total throughput beyond what a single Ethernet device can deliver and to provide a way to fail over if there is an error with one of the devices.

Prerequisites

- The network switch your server is connected to must be set up accordingly for the procedure to succeed

- You need to have the SSH login details of your server ready

Step 1 – Login using SSH

You must be logged in via SSH as a sudo or root user. Please see this article for instructions if you don’t know how to connect.

Step 2 – Install the ifenslave dependency

Step 3: Load bonding kernel module

Before you can configure the network cards you need to ensure that the kernel module called bonding is present and loaded.

If the module is not loaded, use the following command to load it

To ensure that the bonding module is loaded at boot time, edit the following file

Add the following line
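One way to check whether the module is already loaded is to look for it in the kernel's module list. The helper below is a small sketch with a made-up name, `module_loaded`; it reads /proc/modules by default and accepts another file in the same format for testing.

```shell
# Hypothetical helper: succeed if module "$1" appears in the module list.
# $2 is optional and defaults to /proc/modules.
module_loaded() {
    awk -v m="$1" '
        $1 == m { found = 1 }      # first column holds the module name
        END { exit !found }        # exit status 0 only when it was seen
    ' "${2:-/proc/modules}"
}
```

A typical use would be `module_loaded bonding || sudo modprobe bonding`, which only calls modprobe when the module is missing from the list.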

Step 4 – find the active network interface

Step 5 – Configure the network interface

The output of step 4 is the network interface which is active at the moment. You should use that name for the bond. In our case this is enp2s0 and the second interface enp3s0.

Apply the changes

Step 6 – Reboot

Step 7 – Check bonding interface status

If everything went well, you should have a working bonding interface. You can check this by executing the following command

Conclusion

Congratulations, you have configured an LACP network interface according to IEEE 802.3ad on an Ubuntu 18.04 server with Netplan. If you are interested in the other available modes, check this URL, section “Descriptions of bonding modes”.

Comments

Could you please let me know which file do I need to edit in Step 5?

Also where in the file (step5) are the two physical interfaces defined?

You should be able to find the Netplan yaml configuration file in this folder “/etc/netplan/”. Yes, there are two physical interfaces required for creating a bond.

Michael Cooper says

Very nice article I understand the over all concept now just need one thing addressed.

Where are the nics defined in the netplan config file?

You have the following:

################

network:
    version: 2
    ethernets:
        eports:
            match:
                name: enp*
            optional: true
    bonds:
        bond0:
            interfaces: [eports]
            addresses: [78.41.207.45/24]
            gateway4: 78.41.207.1
            nameservers:
                addresses: [89.207.128.252, 89.207.130.252]
            parameters:
                mode: 802.3ad
                lacp-rate: fast
                mii-monitor-interval: 100

############################

I am assuming enp2s0 goes under network -> ethernets->eports->name does it actually use a wildcard character?

Where does enp3s0 get defined in this config?

I see you have eports defined and referenced but where des the second nic get defined?

Not trying to be a jerk just need a little clarification, Thanks for all your hard work.

We are using a wildcard:

>name: enp*

The ports are defined here:

>interfaces: [eports]

I tried the above steps however it is not working for me.

When I see the bonding status it is working fine for me(cat /proc/net/bonding/bond0)

However, after reboot it is not working for me and I am unable to connect to the server.

Is the bonding module loaded after a reboot?

Thanks for your update.

How can I check/load the bonding module?

You can check it with those commands:

sudo lsmod | grep 8021q

Thanks for your response.

Yes, I have loaded the bonding modules after the reboot. However, When I manually unplug the primary ethernet connection(eX:enp0s3) I am unable to connect/ping the server. If I unplug another ethernet connection manually it is working fine for me. Kindly once see the below file and let me know if it requires any modifications.

# interfaces(5) file used by ifup(8) and ifdown(8)
auto lo
iface lo inet loopback

# eth0 is manually configured, and slave to the “bond0” bonded NIC
auto enp0s3
iface enp0s3 inet manual
    bond-master bond0
    bond-primary enp0s3

# eth1 ditto, thus creating a 2-link bond.
auto enp0s8
iface enp0s8 inet manual
    bond-master bond0

# bond0 is the bonding NIC and can be used like any other normal NIC.
# bond0 is configured using static network information.
auto bond0
iface bond0 inet static
    address 192.168.0.7
    gateway 192.168.0.1
    netmask 255.255.255.0
    bond-mode 1
    bond-miimon 100
    bond-slaves none
    # bond-lacp-rate 1
    # bond-slaves enp0s3 enp0s8

Is this a Netplan configuration? It does not look like a YAML configuration. Did you check our Ubuntu 16.04 knowledge base article?

Above configuration, I did in the oracle virtual VM.

kindly let me know, If I restart the server multiple times with bonding changes, will it be an effect on the bonding?

Please correct me if I am wrong, I think due to the network cache I am getting the different results of bonding after every immediate restart.

There is a typo in eports – should be exports.

Thanks for the blog article 🙂

Do you mean the eports in the netplan configuration?

why does one need to reboot?

what is the point of this daemon if we need to reboot to activate or test something that could be configured on the fly more than a decade before the daemon was designed?

Rebooting the server is not necessary but in our article, we do this. Just to be sure that everything is working after a reboot.

DEEPAK RAJPUT says

HOW TO ADD BONDING COMMAND IN KERNEL MODULE

Did you follow step 2 & 3, that should be enough to load the kernel modules.

Syed Tayyab Asghar says

Hi,

Why partner/actor churn stats showed churned any one can tell me ? due to that port-channel at switch showing down.

Make sure the switch is configured correctly and that the cabling is correct.

Network Interface Bonding is a mechanism used in Linux servers that binds multiple physical network interfaces together, either to provide more bandwidth than a single interface can or to provide link redundancy in case of a cable failure. This type of link redundancy has multiple names in Linux, such as Bonding, Teaming or Link Aggregation Groups (LAG).

To use network bonding mechanism in Ubuntu or Debian based Linux systems, first you need to install the bonding kernel module and test if the bonding driver is loaded via modprobe command.

Check Network Bonding in Ubuntu

On older releases of Debian or Ubuntu you should install ifenslave package by issuing the below command.

To create a bond interface composed of the first two physical NICs in your system, issue the below command. However, this method of creating a bond interface is ephemeral and does not survive a system reboot.

To create a permanent bond interface in mode 0 type, use the method to manually edit interfaces configuration file, as shown in the below excerpt.

Configure Bonding in Ubuntu

To activate the bond interface, either restart the network service, bring down the physical interfaces and bring up the bond interface, or reboot the machine so that the kernel picks up the new bond interface.

The bond interface settings can be inspected by issuing the below commands.

Verify Bond Interface in Ubuntu

Details about the bond interface can be obtained by displaying the content of the below kernel file using cat command as shown.

Check Bonding Information in Ubuntu

To investigate other bond interface messages or to debug the state of the bond’s physical NICs, issue the below commands.

Check Bond Interface Messages

Next, use mii-tool to check the Network Interface Controller (NIC) parameters as shown.

Check Bond Interface Link

The types of Network Bonding are listed below.

- mode=0 (balance-rr)

- mode=1 (active-backup)

- mode=2 (balance-xor)

- mode=3 (broadcast)

- mode=4 (802.3ad)

- mode=5 (balance-tlb)

- mode=6 (balance-alb)

The full documentation for NIC bonding can be found in the Linux kernel doc pages.

After installing Ubuntu 18.04/20.04 you can use the following guide to configure LACP on the server.

Before we start:

It’s very convenient to be root while configuring the network on a server, in order to become root on ubuntu we recommend running sudo -i (for local tasks, sudo -e if you may be connecting to another server).

For LACP to work, LACP also has to be configured on the switch, we will inform you if this is the case.

Step 1 – Finding the interfaces

Run the following command: ip link

You will see a list of interfaces, you can ignore the lo interface, if that only leaves two interfaces, those interfaces have to be configured. If more than two interfaces remain LACP should usually be configured on the first two interfaces.

Step 2 – Creating the configuration

On Ubuntu 18.04 after installation the network configuration file will be located in: /etc/netplan/01-netcfg.yaml

Use your preferred editor to edit this file (example: nano /etc/netplan/01-netcfg.yaml ).

Create the configuration, example:

About the configuration:

- You should replace eno1 and eno2 with the interfaces you want to make members. You can also define more interfaces and have more than two interfaces in the bond, but you do need to turn off dhcp4 on all of them.

- You should replace the IP address and gateway with your information. You can find this information in our customer portal if this is managed by us.

- YAML is very sensitive to incorrect indentation. For this reason, we recommend using 2 spaces per indent level (do not use tabs /!\), which makes it easy to find the correct indentation for a line.

- You can replace the addresses of the nameservers ( 8.8.8.8 , 9.9.9.9 ) with your preferred DNS resolvers.

- For optimal performance, it is very important to include transmit-hash-policy: layer3+4 in the configuration file. This setting affects load-balancing, by default this is set to layer2 , this means all traffic to the same mac-address (which our router will always have) will egress over the same interface, limiting performance. Setting it to layer3+4 will split traffic based on the src/dst IP and src/dst port, which results in much better load-balancing.
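Putting the notes above together, a configuration along these lines is one possible shape. The sketch writes it to a file of your choice so you can review it before copying it to /etc/netplan/. The function name `write_sample_netplan` is hypothetical, the address and gateway are placeholders from the 192.0.2.0/24 documentation range, and the mii-monitor-interval value is an assumption borrowed from common examples rather than a requirement.

```shell
# Hypothetical helper: write a sample LACP bond configuration to "$1".
# Indentation uses 2 spaces per level and no tabs.
write_sample_netplan() {
    cat > "$1" <<'EOF'
network:
  version: 2
  ethernets:
    eno1:
      dhcp4: false
    eno2:
      dhcp4: false
  bonds:
    bond0:
      interfaces: [eno1, eno2]
      addresses: [192.0.2.10/24]
      gateway4: 192.0.2.1
      nameservers:
        addresses: [8.8.8.8, 9.9.9.9]
      parameters:
        mode: 802.3ad
        transmit-hash-policy: layer3+4
        mii-monitor-interval: 100
EOF
}
```

For example, `write_sample_netplan /tmp/01-netcfg.yaml` creates a draft that you can edit (interface names, addresses) and compare with the file in /etc/netplan/.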

Step 3 – Applying the configuration

To apply the configuration we just created we need to run: netplan apply . For configurations without bonds, you can also use netplan try , it will start a timer, and if the user does not supply any input in the terminal (lost connection) the configuration will be rolled back.

Step 4 – Checking the configuration

In order to make sure the previous steps have achieved the desired result there are a few things you can do in order to verify the configuration:

- Run the ip link command (again). The output should show a new interface: bond0 should now also be there. If you replace link with address to get ip address you should also see the IP address you entered appear in the output of this command. Example:

- Run cat /proc/net/bonding/bond0 , this will show a lot of information about the bond0 interface. Here are some of the key lines and what they mean:

  - Bonding Mode: IEEE 802.3ad Dynamic link aggregation

    - Shows the bond is configured with 802.3ad (LACP); this is how it negotiates with our switch.

  - Transmit Hash Policy: layer3+4 (1)

    - Shows the bond hashing mode is set to layer3+4, which means it is configured properly in our case.

  - In each Slave Interface section, under details partner lacp pdu, you should also see a MAC address (the system mac address).

- run ping 8.8.8.8 , or another IP which has a low chance of being offline. This will confirm internet connectivity, by default the firewall will allow this type of outbound traffic. If you notice missing icmp_seq numbers, there may be packet loss, please contact our support about this.

- You can also check the interface using ethtool bond0 , you will see the Speed of the interface in there, this should match the sum of the two interfaces configured, example: Speed: 20000Mb/s

Pro Tip:

Our network has been configured to accept connections regardless of if LACP has been set up on the server, this means you can temporarily set up an IP address on your server so you can copy the information from this tutorial, edit it in your text editor and then paste it on the server, this decreases the chance of typos 🙂

You can quickly set up the network for SSH by running the following commands (replace the IP information with the information for your server)

In case you are still running into issues, feel free to contact our support about this!

Combining network interfaces in Ubuntu using “bonding”

This article describes how to combine two physical network interfaces into one in order to increase throughput or improve the fault tolerance of the network. In Linux this is done with the bonding module and the ifenslave utility. In most recent distribution releases the bonding kernel module is already present and ready to use; in some you will have to build it yourself.

Install the required software:

Then add the bonding module to the modules loaded at boot and set its startup options. To do this, append text to the end of /etc/modules, following the logic of the examples below.

Example for one virtual interface made of two physical ones:

Example for creating two interfaces from four physical ones:

More about the bonding modes

mode = 0 (balance-rr)

Round-robin: uses the physical interfaces cyclically to transmit packets. Recommended as the default choice. This mode delivers the highest throughput.

mode = 1 (active-backup)

Only one interface is active; the others wait as hot standbys. If the active interface stops working, the next one takes over its load (assuming its MAC address) and becomes active. No additional switch configuration is required.

mode = 2 (balance-xor)

XOR policy: transmit based on [(source MAC address XOR destination MAC address) % number of interfaces]. This selects a specific interface for each destination according to its MAC address. The mode provides load balancing and fault tolerance.

mode = 3 (broadcast)

All packets are transmitted on all interfaces in the group. The mode provides fault tolerance.

mode = 4 (802.3ad)

IEEE 802.3ad Dynamic Link aggregation. Creates aggregation groups that share the same speed and duplex settings. Uses all enabled interfaces in the active aggregator according to the 802.3ad specification.

Prerequisites

ethtool support (ethtool displays or changes network card settings) in the base drivers for retrieving the speed and duplex of each interface.

A switch that supports IEEE 802.3ad Dynamic Link aggregation. Most switches will require some configuration to enable 802.3ad mode.

mode = 5 (balance-tlb)

Adaptive transmit load balancing: the bond does not require any special switch configuration. Outgoing traffic is distributed according to the current load (computed relative to the speed) on each interface. Incoming traffic is received by the current interface. If the receiving interface fails, the next one takes its place, taking over its MAC address.

Prerequisites

ethtool support (ethtool displays or changes network card settings) in the base drivers for retrieving the speed and duplex of each interface.

mode = 6 (balance-alb)

Adaptive load balancing: includes balance-tlb plus receive load balancing (rlb) for IPv4 traffic and does not require any special switch configuration. In other words, everything works as in mode = 5, except that incoming traffic is also balanced across the interfaces. Receive load balancing is achieved through ARP negotiation. The driver intercepts the ARP replies sent by the local system on their way out and overwrites the source hardware address with the unique hardware address of one of the interfaces in the group.

Let us try loading the bonding module by hand

The practice of merging several network interfaces into one is known as network bonding. The main goal of network bonding is to improve performance and capacity while also providing network redundancy. Network bonding is also advantageous where fault tolerance is a crucial consideration, such as in load-balanced connections. Packages for network bonding are available on Linux. Let’s have a look at how to set up network bonding in Ubuntu using the console. Before you start, make sure you have the following:

- An administrative (root or sudo) user account

- Two or more network interface adapters

Install the bonding module in Ubuntu

We need to load the bonding module first. Log in to your system and open a terminal with “Ctrl+Alt+T”. Make sure the bonding module is available and enabled on your Linux system. To load the bonding module, type the command below and enter your user password when prompted.

The query below confirms that bonding has been enabled:

If the bonding module is missing from your system, install the ifenslave package with apt, entering your password when prompted.

Confirm the installation by pressing “y”. Otherwise, press “n” to cancel the installation.

You can see the system has successfully installed and enabled network bonding on your system as per the below last lines of output.

Temporary Network Bonding

Temporary bonding lasts only until the next reboot: when you restart your system, the bond disappears. Let’s begin with temporary bonding. First of all, we need to check how many interfaces are available in our system to be bonded. To do so, run the below command in the shell and enter your account password to proceed. The output below shows that we have two Ethernet interfaces, enp0s3 and enp0s8, available in the system.

First of all, you need to change the state of both the Ethernet interfaces to “down” using the following commands:

Now, create a bond interface named bond0 via the ip link command as below. Make sure to use “802.3ad” as the bond mode.

After creating the bond, add both interfaces to the master bond0 as below.
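The sequence just described (take the interfaces down, create the bond, enslave the interfaces) can be previewed with a small dry-run helper. This sketch only prints the `ip link` commands and does not change the system. The function name `bond_commands` is made up for illustration, and the final `ip link set ... up` line is an assumption about how you would activate the bond afterwards.

```shell
# Hypothetical dry-run helper: print the ip commands for a temporary bond.
# $1 = bond name, $2 = bond mode, remaining arguments = member interfaces.
bond_commands() {
    bond="$1"
    mode="$2"
    shift 2
    for nic in "$@"; do
        echo "ip link set $nic down"          # members must be down first
    done
    echo "ip link add $bond type bond mode $mode"
    for nic in "$@"; do
        echo "ip link set $nic master $bond"  # enslave each member
    done
    echo "ip link set $bond up"
}
```

Calling `bond_commands bond0 802.3ad enp0s3 enp0s8` prints six commands that you can review and then run with sudo one by one.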

You can affirm the creation of network bonding using the below query.

Permanent Network Bonding

If somebody wants to make a permanent networking bonding, they have to make changes to the configuration file of network interfaces. Hence, open the file in GNU nano editor as below.

Now update the file with the following configuration. Make sure to set bond_mode to 4 or 0. Save the file and exit.

To enable the network bond, we need to change the state of both slave interfaces to down and the state of the master interface to up, using the below command.

Now restart the network service using the below systemctl command.

You can also use the below command instead of the above command.

Now you can confirm whether the master interface has been “up” or not using the below query:

You can check out the status of a newly created network bond that has been created successfully by utilizing the below query.

Conclusion

This article explained how to combine several network interfaces into a single bonded interface using the Linux bonding module. Hopefully you ran into no issues during the implementation.

Karim Buzdar

About the Author: Karim Buzdar holds a degree in telecommunication engineering and holds several sysadmin certifications. As an IT engineer and technical author, he writes for various web sites. You can reach Karim on LinkedIn

The Linux kernel provides us with modules to perform network bonding. This tutorial discusses how to use the Linux bonding module to connect multiple network interfaces into a single interface.

Before we dive into the terminal and enable network bonding, let us discuss key concepts in network bonding.

Types Of Network Bonding

There are seven types of network bonding. They are:

- mode=0 – This is the default bonding type. It is based on the Round-Robin policy (from the first interface to the last) and provides fault tolerance and load balancing features.

- mode=1 – This type of bonding is based on the Active-Backup policy (only a single interface is active; another interface takes over only when the active one fails). This mode can provide fault tolerance.

- mode=2 – This type of bonding provides features such as load balancing and fault tolerance. It sets an XOR mode performing an XOR operation of the source MAC address with the destination MAC address.

- mode=3 – Mode 3 is based on broadcast policy, transmitting all packets to all interfaces. This mode is not a typical bonding mode and applies to specific instances only.

- mode=4 – Mode 4, or Dynamic Link Aggregation mode, creates aggregation groups with the same speed. Interface selection for outgoing traffic is carried out based on the transmit hashing method. You can change the hashing method from the default XOR policy using xmit_hash_policy. It requires a switch that supports 802.3ad dynamic link aggregation.

- mode=5 – In this mode, the current load on each interface determines the distribution of the outgoing packets. The current interface receives the incoming packets. If the receiving interface fails, another interface takes over its MAC address. It is also known as Adaptive transmit load balancing.

- mode=6 – This type of balancing is also known as Adaptive load balancing. It has a balance-transmit load balancing and a receive-load balancing. The receiving-load balancing uses ARP negotiation. The network bonding driver intercepts the ARP replies from the local device and overwrites the source address with a unique address of one of the interfaces in the bond. This mode does not require switch support.

How to Configure Network Bonding on Ubuntu

Let us dive into the terminal and configure network bonding in ubuntu. Before we begin, ensure you have:

- A root or a sudo user account

- Two or more network interfaces

Install Bonding module

Ensure you have the bonding module installed and enabled in your kernel. Use the lsmod command as:

sudo lsmod | grep bonding

bonding 180224 1

If the module is unavailable, use the command below to install.

Ephemeral Bonding

You can set up a temporary network bonding using two interfaces in your system. To do this, start by loading the bonding driver.

In the next step, let us get the names of the ethernet interfaces in our systems. Use the command:

The above command shows the interfaces in the system. You can find an example output in the image below:

Now, let us create a network bond using the ip command as:

sudo ifconfig ens33 down

sudo ifconfig ens36 down

sudo ip link add bond0 type bond mode 802.3ad

Finally, add the two interfaces:

sudo ip link set ens33 master bond0

sudo ip link set ens36 master bond0

To confirm the successful creation of the bond, use the command:

NOTE: Creating a bond, as shown above, will not survive a reboot.

Permanent Bonding

We need to edit the interface configuration file and add the bonding settings to create a permanent bond.

In the file, add the following configuration.

iface ens33 inet manual

iface ens36 inet manual

iface bond0 inet static

slaves ens33 ens36

NOTE: Ensure that the interfaces are bond=4 compliant. If not, you can use bond=0 instead. You may also need to take the two interfaces down and enable the bond.

Use the command below to activate the bond.

sudo ifconfig ens33 down && sudo ifconfig ens36 down && sudo ifconfig bond0 up

sudo service network-manager restart

To confirm the interface is up and running, use the command:

To view the status of the bond, use the command as:

Here is an example output:

In Closing

This guide walked you through how to set up network bonding in Ubuntu and Debian-based distributions. To get detailed information about bonding, consider the documentation.

About the author

John Otieno

My name is John and I am a fellow geek like you. I am passionate about all things computers, from hardware and operating systems to programming. My dream is to share my knowledge with the world and help out fellow geeks.

Messing about in the lab configuring 802.3ad LACP bundled interfaces between switches and I wanted to see how easy (or hard) it would be to create a bonded interface on a server. I’ve got an Ubuntu 14.04LTS VM and 3 NICs available, so eth1 and eth2 were told they will become one 😀

Let’s get cracking!

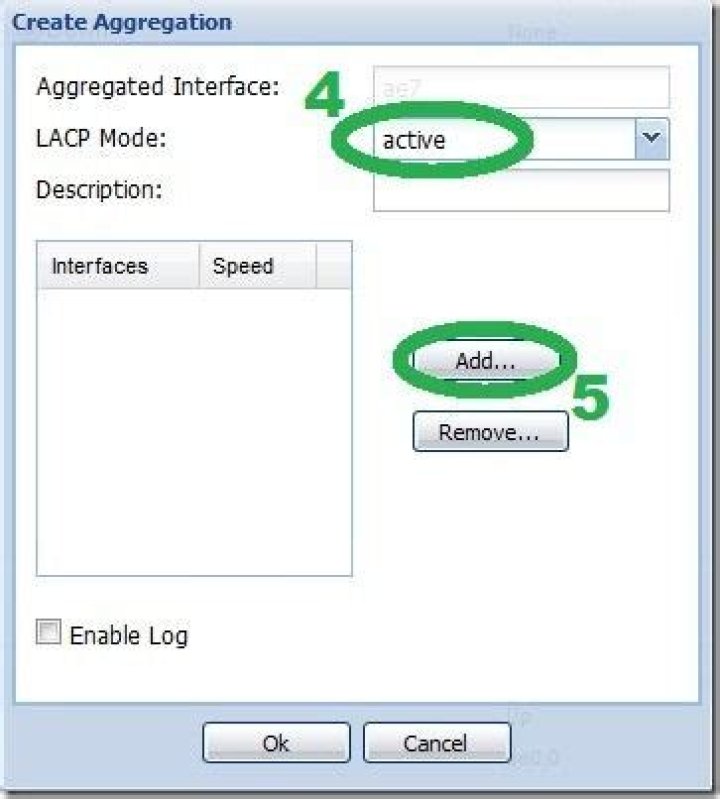

Firstly, I configured the switch with an 802.3ad LACP aggregated interface and set the interfaces to be a part of the aggregated interface:

Server-wise, check that the NICs can be configured as an 802.3ad bond. With the LACP method of bonding, you need to ensure that the NICs support ethtool.

Run ethtool against each NIC; if a link is detected then you’re good to go:

I needed to install the ifenslave package, as it is used to attach NICs to and detach them from a bonding interface.

Once that has been installed, the kernel module file needs to be edited to include bonding before creating a bonded interface:

Once that is saved, manually load the module:

Next, edit the interfaces file to define the bond: sudo nano /etc/network/interfaces

- Mode 0 – balance-rr

- Mode 1 – active-backup

- Mode 2 – balance-xor

- Mode 3 – broadcast

- Mode 4 – 802.3ad

- Mode 5 – balance-tlb

- Mode 6 – balance-alb

For more in-depth details on bonding modes and Linux Ethernet Bonding visit Kernel.org white paper documentation

Save and exit, then restart networking or reboot the server for the change to take effect.

Once the reboot/restart has completed you should be sorted. You can check this by running the commands ifconfig

or cat /proc/net/bonding/bond0

By using cat /proc/net/bonding/bond0 you can also check if a link in the bond has failed as the Link Failure Count would increase.
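As a rough illustration of reading the failure counters, the sketch below pulls the per-slave MII status and Link Failure Count out of the bonding status text with awk. The helper name bond_failures is hypothetical, and the sample input is made up and trimmed; only the field labels follow what the bonding driver prints.

```shell
#!/bin/sh
# bond_failures is a hypothetical helper for this sketch.
# It reads bonding status text (file arguments or stdin) and prints
# one line per slave: name, MII status, link failure count.
bond_failures() {
    awk -F': ' '
        /^Slave Interface:/    { slave = $2 }
        /^MII Status:/         { if (slave != "") status = $2 }
        /^Link Failure Count:/ { print slave, status, $2 }
    ' "$@"
}

# Demo on a trimmed sample; the values are invented for illustration.
bond_failures <<'EOF'
Bonding Mode: IEEE 802.3ad Dynamic link aggregation
MII Status: up

Slave Interface: eth1
MII Status: up
Link Failure Count: 0

Slave Interface: eth2
MII Status: down
Link Failure Count: 1
EOF
```

On a live system the same function could be pointed at the real file with `bond_failures /proc/net/bonding/bond0`.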

And that’s how you can configure an 802.3ad bonded interface 🙂

What is Bonding?

Bonding is the process of joining multiple Ethernet interfaces into one; it is also called network teaming. In other words, several network cards act as a single network card, so if one of the cards is disconnected, the connection continues over another card.

What is LACP?

EtherChannel lets a switch treat two or more cables connected between two switches as a single link. It can be negotiated with two protocols: the first is Cisco's PAgP (Port Aggregation Protocol) and the second is LACP (Link Aggregation Control Protocol), standardized as IEEE 802.3ad. EtherChannel provides redundancy, load balancing, and higher bandwidth.

Bonding Modes

mode = 0 >> Round-robin (balance-rr). Sends packets over the interfaces in turn.

mode = 1 >> Active-backup. Only one interface is active at a time.

mode = 2 >> balance-xor. Selects the interface with [(source MAC address XOR destination MAC address) % number of interfaces].

mode = 3 >> Broadcast. Sends every packet on all interfaces.

mode = 4 >> IEEE 802.3ad dynamic link aggregation (LACP). All interfaces are active.

mode = 5 >> balance-tlb. Outgoing traffic is shared according to the load on each interface.

mode = 6 >> balance-alb. Adaptive load balancing.
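To make the mode 2 formula concrete, here is a small sketch of the XOR selection in shell arithmetic. It is a simplification: it uses only the last octet of each MAC address and ignores everything else the kernel may mix into the hash. The helper name xor_slave and the MAC addresses are made up for illustration.

```shell
#!/bin/sh
# xor_slave is a hypothetical helper: given a source MAC, a destination
# MAC and the number of slaves, print the index of the slave that a
# simplified balance-xor hash would pick.
xor_slave() {
    src_last=${1##*:}   # last octet of the source MAC, e.g. 55
    dst_last=${2##*:}   # last octet of the destination MAC, e.g. bc
    echo $(( (0x$src_last ^ 0x$dst_last) % $3 ))
}

# 0x55 XOR 0xbc = 0xe9 = 233, and 233 % 2 = 1
xor_slave 00:11:22:33:44:55 66:77:88:99:aa:bc 2
```

Because the result depends only on the address pair, the same destination always maps to the same slave, which keeps the packets of one conversation in order.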

Our example scenario is as follows,

NIC-Bonding Example

1- Bonding with LACP configuration,

Now edit the file “/etc/network/interfaces” as follows, substituting your own NIC names.

1. Working mode of Bond

The Linux bonding driver can bind multiple network cards into one logical network card for load balancing and redundancy.

Bonding has seven working modes:

1) mode=0 (balance-rr), round-robin policy

Packets are transmitted in sequential order from the first slave through the last. This mode provides load balancing and fault tolerance. All slaves share the bond's single MAC address, so the switch ports must be configured for link aggregation; otherwise the switch sees the same MAC address on several ports and cannot tell which port to deliver packets to.

2) mode=1 (active-backup), active-standby policy

Only one slave is active. If it goes down, another slave is immediately promoted from backup to active. The bond's MAC address is externally visible on only one port. This mode provides fault tolerance. No special switch configuration is required; ordinary access ports are enough.

3) mode=2 (balance-xor), XOR policy

Transmits packets based on the selected hash policy. This mode provides load balancing and fault tolerance.

4) mode=3 (broadcast), broadcast policy

Every packet is transmitted on every slave interface. Because this duplicates all traffic, the mode is rarely used. It provides fault tolerance.

5) mode=4 (802.3ad), IEEE 802.3ad dynamic link aggregation

Creates aggregation groups that share the same speed and duplex settings. This mode provides fault tolerance and load balancing. Each slave's driver must support ethtool so that speed and duplex can be retrieved, and the switch must also have 802.3ad (LACP) enabled.

6) mode=5 (balance-tlb), adaptive transmit load balancing

No special switch support is required. Outgoing traffic is distributed across the slaves according to their current load (computed relative to speed). If the slave receiving data fails, another slave takes over its MAC address. The switch sees the MAC addresses of several network cards, so it does not need to be configured for link aggregation.

7) mode=6 (balance-alb), adaptive load balancing

Includes balance-tlb plus receive load balancing (RLB) for IPv4 traffic, and does not need any switch support. The switch again sees the MAC addresses of several network cards, and no link aggregation configuration is required.

Mode 6 works as follows:

1. Receive load balancing is achieved through ARP negotiation. The bonding driver intercepts ARP replies sent by the local machine and rewrites the source MAC address to the MAC address of one of the slaves in the bond, so that each peer learns the MAC address of the slave the server wants it to use, and traffic to the server arrives on that slave.

2. Receive traffic for connections initiated by the server is balanced the same way. When the server sends an ARP request, the bonding driver copies and saves the peer's IP information from the ARP packet. When the ARP reply arrives from the peer, the driver extracts the peer's MAC address and sends that peer an ARP reply that assigns it to one of the slaves in the bond.

3. When a network card fails or an ARP entry expires, the bond recalculates and redistributes the traffic across the network cards.

2. Configuration steps

The following steps bind two network cards with mode=6; the procedure for other modes is the same.

2.1. Install ifenslave software

ifenslave is a tool that attaches network cards to and detaches them from a bonding device, so that the bonding driver can distribute packets across them.

2.2. /etc/modules file

Add the following configuration to the /etc/modules file:

mode=6 selects mode 6 (balance-alb).

miimon is used for link monitoring. For example, miimon=100 means that the system checks the link state every 100 ms. If one link fails, traffic switches to another.

2.3. Modify the /etc/network/interfaces file

First, determine the names of the network interfaces; you can view them with the ifconfig command. Here the interfaces are ens33 and ens34. Add the following configuration to the /etc/network/interfaces file:

2.4. Loading the binding module

2.5. Viewing status

View network configuration

Viewing binding status

2.6 verification test

Physically close a network card (unplug the network cable)

Note: do not use ifdown to take the network card down for this test, as that will interrupt the network.

3. Summary

Linux bonding has seven working modes. To increase throughput, mode=6 is the usual choice; if network stability matters most, mode=1 is the usual choice.

4. Reference articles

Ubuntu configures dual network card binding to achieve load balancing (example code):

Linux multi network card bond mode VS switch link aggregation:

linux multi network card binding aggregation bond technology:

Posted by cmay4 on Fri, 08 Oct 2021 03:53:25 -0700

I am running into some issues configuring netplan on Ubuntu 18.04 server to bond my four hardware ethernet ports named eno1, eno2, eno3, eno4 using the 802.3ad protocol. I’ve consulted the netplan man page and put together the following config file /etc/netplan/50-cloud-init.yaml :

Upon running the command sudo netplan --debug apply I receive the following information:

I’m not sure what to make of the statement

since the directory /sys/class/net/bond0 was generated by the netplan apply command.

I checked my ifconfig output and my network devices seem to be configured correctly with the exception that no address is set for bond0 :

The ether XX:XX:XX:XX:XX:XX statements are in place of each interface’s MAC address. In the original output, all addresses are the same.

What am I missing to successfully configure my system?

2 Answers

After some digging, I discovered that Ubuntu 18.04 uses a utility called cloud-init to handle network configuration and initialization during the boot sequence. The file /etc/cloud/cloud.cfg.d/50-curtin-networking.cfg and other .cfg files are used to reconfigure cloud-init settings. My config file settings are as follows:

The optional: true parameter prevents the system from waiting for a valid network connection at boot time which will save you the hassle of waiting 2 minutes for your machine to boot. After updating the config file run the following command to update your configuration.

Alternatively running the following allows for some debug information without rebooting your machine; however, a reboot will be required to commit the changes during early boot stages.

Network bonding is the aggregation or combination of multiple LAN cards into a single bonded interface to provide high availability and redundancy. Network bonding is also known as NIC teaming.

In this article, we will discuss how to configure network bonding on Ubuntu 14.04 LTS Server. In my scenario, I have two LAN cards, eth0 and eth1, and will create a bond interface bond0 in active-backup (active-passive) mode.

Step 1: Install bonding Kernel module using below command.

Step 2: Load the kernel module.

Edit the file /etc/modules and add the bonding module at the end.

Save & exit the file.

Now load the module using modprobe command as shown below:

Step 3: Edit interface config file.

Step 4: Restart the networking service & see the bond interface status.

Verify the bond interface using below command:

We can also use ifconfig command to see the bond interface.

Now check the bond interface status using below command:

Note: To test the bond, take one interface down, confirm that the server is still reachable, and check the bond status.

Different Modes Used in Network Bonding are listed below:

- balance-rr or 0: sets a round-robin mode for fault tolerance and load balancing.

- active-backup or 1: sets an active-backup mode for fault tolerance.

- balance-xor or 2: sets an XOR (exclusive-or) mode for fault tolerance and load balancing.

- broadcast or 3: sets a broadcast mode for fault tolerance. All transmissions are sent on all slave interfaces.

- 802.3ad or 4: sets an IEEE 802.3ad dynamic link aggregation mode. Creates aggregation groups that share the same speed and duplex settings.

- balance-tlb or 5: sets a Transmit Load Balancing (TLB) mode for fault tolerance and load balancing.

- balance-alb or 6: sets an Adaptive Load Balancing (ALB) mode for fault tolerance and load balancing.
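Since each mode can be given either by name or by number, a small lookup can help when reading someone else's configuration. The sketch below maps the numeric value to the mode name from the list above; bond_mode_name is a hypothetical helper, not part of any package.

```shell
#!/bin/sh
# bond_mode_name is a hypothetical helper: translate a numeric bonding
# mode (0-6) into the name used in the list above.
bond_mode_name() {
    case "$1" in
        0) echo balance-rr ;;
        1) echo active-backup ;;
        2) echo balance-xor ;;
        3) echo broadcast ;;
        4) echo 802.3ad ;;
        5) echo balance-tlb ;;
        6) echo balance-alb ;;
        *) echo "unknown bonding mode: $1" >&2; return 1 ;;
    esac
}

bond_mode_name 1
bond_mode_name 4
```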

It’s quite common to see in a network environment that multiple interfaces are connected to the network devices for redundancy.

If one of the links ever goes down, the remaining links take care of the traffic.

Bonding with LACP on netplan

Bonding is a way to combine multiple interfaces into one and get maximum bandwidth. Several protocols can create a bond; one of them is the industry standard known as LACP. We are going to configure bonding using LACP with Netplan on Ubuntu.

If you come from a networking background, you usually call the bond interface a port-channel, bridge aggregation, or link aggregation. On the server side we call it bonding or a bond interface. In the end, it is the same thing.

Below is what my lab physical connectivity looks like.

The Ubuntu box is connected to a Layer 3 switch with six interfaces.

I am going to combine all six interfaces as bond0 with an IP address. This interface is an untagged port (access port) and is configured as a port-channel on the switch side.

Later we will take a look at a tagged configuration using a specific VLAN.

Note: Switch configuration not covered here.

In /etc/netplan edit the YAML file as below.

Note: Before you perform any change, make sure you backup the configuration.

Step 1. Group the interface.

First, you need to group all the interfaces as one. Since my interface names start with ens, I grouped them as ens*, which matches any interface whose name starts with ens.

Step 2. Configure the Bond interface.

Then define the bond interface, reference the interface group eports that you have just created, and configure the IP address on it as well.

Step 3. Add the LACP configuration.

After that, add the LACP configuration. LACP is the standard bonding protocol.

- The final netplan configuration would look like below.
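As a hedged sketch of how steps 1 to 3 fit together, the function below prints a netplan document with an eports group matching ens*, a bond0 built on it, and LACP parameters. The /24 prefix, the lacp-rate and mii-monitor-interval values, and the helper name lacp_bond_yaml are assumptions made for illustration; adjust them to your network before using the output.

```shell
#!/bin/sh
# lacp_bond_yaml is a hypothetical helper that prints a netplan sketch.
# Argument: bond address in CIDR form (default 10.1.1.10/24; the /24
# prefix length is an assumption, it is not stated in the article).
lacp_bond_yaml() {
    addr=${1:-10.1.1.10/24}
    cat <<EOF
network:
  version: 2
  ethernets:
    eports:
      match:
        name: "ens*"
  bonds:
    bond0:
      interfaces: [eports]
      addresses: [$addr]
      parameters:
        mode: 802.3ad
        lacp-rate: fast
        mii-monitor-interval: 100
EOF
}

lacp_bond_yaml
```

The output could be reviewed and then saved under /etc/netplan, after backing up the existing file as noted earlier.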

Step 4. Apply the configuration.

Save the configuration and apply the configuration that you have just made using the command below.

Step 5. Verification.

In a bond there are slaves and a master: the physical interfaces that are part of the logical bond interface are the slaves, and the bond interface is the master.

Run ip addr. You should see that the physical interfaces have become SLAVE and are now up.

Looking at the status of the bond0 interface, you can see that it has become MASTER and is up as well, and that it has the IP address 10.1.1.10, which is good!

- Let’s ping the default gateway to make sure that the connectivity is okay.

We will create a bond0 interface that bonds the following two physical interfaces: em2 and em3.

Different bonding modes can be applied (depending on the switch's capabilities).

- Mode 0 (balance-rr): This mode is also known as round-robin mode. Packets are sequentially transmitted and received through each interface one by one. This mode provides load balancing functionality.

- Mode 1 (active-backup): This mode has only one interface set to active, while all other interfaces are in the backup state. If the active interface fails, a backup interface replaces it as the only active interface in the bond. The media access control (MAC) address of the bond interface in mode 1 is visible on only one port (the network adapter), which prevents confusion for the switch. Mode 1 provides fault tolerance.

- Mode 2 (balance-xor): The source MAC address uses exclusive or (XOR) logic with the destination MAC address. This calculation ensures that the same slave interface is selected for each destination MAC address. Mode 2 provides fault tolerance and load balancing.

- Mode 3 (broadcast): All transmissions are sent to all the slaves. This mode provides fault tolerance.

- Mode 4 (802.3ad): This mode creates aggregation groups that share the same speed and duplex settings, and it requires a switch that supports IEEE 802.3ad dynamic link aggregation. Mode 4 uses all interfaces in the active aggregation group. For example, you can aggregate three 1 Gbps ports into a trunk with 3 Gbps of total capacity, although a single connection is typically still limited to the speed of one link. It provides fault tolerance and load balancing.

- Mode 5 (balance-tlb): This mode ensures that the outgoing traffic distribution is set according to the load on each interface and that the current interface receives all the incoming traffic. If the assigned interface fails to receive traffic, another interface is assigned to the receiving role. It provides fault tolerance and load balancing.

- Mode 6 (balance-alb): This mode is supported only in x86 environments. The receiving packets are load balanced through Address Resolution Protocol (ARP) negotiation. This mode provides fault tolerance and load balancing.

Description

Backup the existing interfaces that you plan to configure as bond slaves by using the following commands:

Hardware Version : Not Clear

I am going to configure an LACP bonding Mode 4 – Dynamic link aggregation bonding on my Ubuntu Server 14.04.3 LTS 64-bit box. My switch TP-Link TL-SG3424 allows for LACP bonding via IEEE 802.3ad protocol. What makes my headaches are the two NICs I have on my motherboard integrated. One of them proves itself to be model Marvell 88E8056 PCI-E Gigabit Ethernet Controller, the other one Marvell 88E8001 Gigabit Ethernet Controller. No bonding has been achieved with them. I succeeded just with Mode 1 (active-backup) that works perfect. This is why I started searching for a TP-Link NICs that would meet the requirements, i.e. they support IEEE 802.3ad protocol.

My dealer has offered two TL-SG3424 Revision 3.2 network adapters. I am not sure if they are OK for my configuration. On the TP-Link web site I found the specification of the Gigabit PCI Network Adapter TG-SG3424 Revision 2.1/2.2 that showed the following protocols: IEEE 802.3, 802.3u, 802.3ab, 802.3x, 802.1q, 802.1p, however no IEEE 802.3ad protocol that is supposed to be implemented when configuring the NIC bonding Mode 4 – Dynamic link aggregation. The two cards from my dealer are Revision 3.2, however. In comparison to the web site specification they have a different revision number, but I do not know the exact specification. Hence my questions to somebody among you willing enough to help:

- Is there a crucial difference between the Revision 2.1/2.2 specs shown on the website and the unknown specs of the same card model with Revision 3.2?

- Does the NIC model TG-SG3424 Revision 3.2 support the IEEE 802.3ad protocol?

- If not, can somebody recommend a different model of a TP-Link NIC that would comply with the requirements for NIC bonding Mode 4 – Dynamic link aggregation?

Any help would be highly appreciated. Many thanks for your nice support in advance.

Introduction

NIC teaming presents an interesting solution to redundancy and high availability in the server/workstation computing realms. With multiple network interface cards, an administrator can become creative in how a particular server is accessed, or create a larger pipe for traffic to flow through to that server.

This guide will walk through teaming two network interface cards on a Debian 11 system. We will be using the ifenslave software to attach and detach NICs from a bonded device.

The first thing to do, before any configuration, is to determine the type of bonding that the system actually needs. There are seven bonding modes (0 through 6) supported by the Linux kernel as of this writing. Some of these bond modes are simple to set up, and others require special configuration on the switches to which the links connect.

Understanding the Bond Modes :

1- Update and upgrade

Log in as root and run the update and upgrade commands:

So in this case, we will be using Debian 11.

2- Install ifenslave package

The second step in this process is to obtain the proper software from the repositories. The software for Debian is ifenslave, and it can be installed with apt.

3- Load the kernel module

Once the software is installed, the kernel needs to be told to load the bonding module, both for the current session and on future reboots.

4- Create the bonded interface

Now that the kernel has been made aware of the necessary modules for NIC bonding, it is time to create the actual bonded interface. This is done through the interfaces file, which is located at ‘/etc/network/interfaces’.

This file contains the network interface settings for all of the network devices the system has connected. This example has two network cards (eth0 and eth1).

In this file, create the bond interface that enslaves the two physical network cards into one logical interface.

‘bond-mode 1’ determines which bond mode this bonded interface uses. In this instance, bond-mode 1 indicates an active-backup setup, with the option ‘bond-primary’ naming the primary interface for the bond to use. ‘slaves eth0 eth1’ states which physical interfaces are part of this bonded interface.

In addition, the next couple of lines determine when the bond should switch from the primary interface to one of the other slave interfaces in the event of a link failure. miimon is one of the options available for monitoring the status of bond links; the other option is ARP monitoring.

This guide will use miimon. ‘bond-miimon 100’ tells the kernel to inspect the link every 100 ms. ‘bond-downdelay 400’ means that the system will wait 400 ms before concluding that the currently active interface is indeed down.

‘bond-updelay 800’ tells the system to wait 800 ms after the link comes up before using the new active interface. Most importantly, updelay and downdelay must both be multiples of the miimon value; otherwise the system rounds them down to the nearest multiple.
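The rounding rule is easy to check with integer arithmetic. The sketch below shows what effective delay a given setting would end up with for a given miimon interval; round_delay is a hypothetical helper name and the 450 ms value is only an example of a non-multiple.

```shell
#!/bin/sh
# round_delay is a hypothetical helper: round a delay (ms) down to the
# nearest multiple of the miimon interval (ms), using integer division.
# Usage: round_delay <delay_ms> <miimon_ms>
round_delay() {
    echo $(( $1 / $2 * $2 ))
}

round_delay 400 100   # already a multiple, stays 400
round_delay 450 100   # not a multiple, becomes 400
```

With miimon 100, a downdelay of 450 would therefore behave like 400, which is why the guide picks 400 and 800 directly.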

5- Bring up the bonded interface

- ifdown eth0 eth1 – This will bring both network interfaces down.

- ifup bond0 – This will tell the system to bring the bond0 interface on-line and subsequently also bring up eth0 and eth1 as slaves to the bond0 interfaces.

As long as all goes according to plan, the system should bring eth0 and eth1 down and then bring up bond0. By bringing up bond0, eth0 and eth1 will be reactivated and made members of the active-backup NIC team created in the interfaces file earlier.

6- Check the bonded interface status

7- Testing the Bond Configuration

We will disconnect the eth0 interface to see what happens

Originally the bond was using eth0 as the primary interface, but when the network cable was disconnected, the bond had to determine that the link was indeed down, wait the configured 400 ms to completely disable the interface, and then bring one of the other slave interfaces up to handle traffic.

This output shows that eth0 has had a link failure and the bonding module corrected the problem by bringing the eth1 slaved interface on-line to continue handling the traffic for the bond.
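One way to watch the failover without reading the whole status file is to extract just the active slave. The sketch below does that with awk; active_slave is a hypothetical helper name, and the sample text is a made-up, trimmed stand-in for /proc/net/bonding/bond0 after eth0 was unplugged.

```shell
#!/bin/sh
# active_slave is a hypothetical helper: print the interface named on
# the "Currently Active Slave" line of bonding status text
# (file arguments or stdin).
active_slave() {
    awk -F': ' '/^Currently Active Slave:/ { print $2 }' "$@"
}

# Demo on an invented, trimmed sample.
active_slave <<'EOF'
Bonding Mode: fault-tolerance (active-backup)
Primary Slave: eth0
Currently Active Slave: eth1
MII Status: up
EOF
```

On a running system, `active_slave /proc/net/bonding/bond0` before and after pulling the cable should show the change from eth0 to eth1.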

At this point the bond is functioning in an active-backup state as configured! While this particular guide only went through active-backup teaming, the other methods are very simple to configure as well, but will require different parameters depending on which bonding method is chosen. Remember, though, that of the seven bond modes available, bond mode 4 will require special configuration on the switches to which the system is connected.

I’m spinning out on this one. Someone please help!

I followed this fab tutorial below to make my own Ubuntu based router:

Which works great using only two ports of the four ports available on my hardware which is:

– old lenovo desktop with an old 4 port HP Gigabit Adaptor NC364T

– interface 1 (WAN): DHCP conn to BT HomeHub router (192.168.1.?/24)

– interface 2 (LAN): static 10.1.0.1 with DHCP server setup over subnet 10.1.0.0/24

– interface 2 then connects to a new Cisco SG220-26 switch and hey presto I’m up with all my LAN devices!

However, as I have some storage in the box as well and both the switch and the network card support link aggregation, I thought I could create a bond in netplan and increase the bandwidth (e.g. use 1 port for WAN and the 3 others for a LAN aggregated link to the switch). Plus it’s a learning exercise, right?

I can’t seem to get this to work, either as a bond or by adding static/DHCP interfaces to my netplan YAML.

Here is the working netplan yaml for the two port version:

And the working /etc/rc.local iptables configuration:

Using the netplan example for a bonded router as a starting point, my not working bonded netplan yaml looks like:

With my not working bonded /etc/rc.local iptables configuration identical, except I have switched the LAN interface (enp3s0f1) for the bond interface (pigeon-lan):

Also, I have changed the DHCP interface in /etc/default/dhcpd.conf to hit the bond as well.

As soon as this is applied (netplan/dhcp/reboot etc.) I can’t hit the WAN/LAN via the switch at all, but can ping out from the box to google etc so the WAN looks ok.

scratching my head on this one as to where to go so any help would be appreciated!!

In addition to the internal network interface, I added a USB3.0 network controller for testing purposes, will probably be replaced by an internal dual NIC or just another single NIC. I’m running Lubuntu and can’t seem to get a network bond working correctly.

I’m trying to use link aggregation with 802.3ad so that both interfaces work at the same time. I got the information mainly from the official ubuntu wiki and from this post.

Here is the configuration (after loading bonding kernel module)

In dmesg I then see

Warning: No 802.3ad response from the link partner for any adapters in the bond

Network is still working, but only with one interface. If I check ifconfig, I see both interfaces are listed as SLAVE but only one actually has more than a few KB transferred. I assume, this is because the switch to which both interfaces are connected to, also needs to be configured correctly, makes sense.

I’ve got D-Link DGS-1100-08 switches. If I read the specifications correctly, these switches should support 802.3ad. So I configure them through L2 Features -> Link Aggregation -> Enabled and add the two ports to one of the groups. As soon as I save these settings, network doesn’t work at all anymore.

What could be the problem? Did I misunderstand something? (bond-mode 4 should be 802.3ad, right? And configuring the switch as I did should enable Lubuntu to communicate with both NICs at the same time, right?)

Find out how to enable OVHcloud Link Aggregation in the OVHcloud Control Panel

Last updated 15th November 2021

Objective

OVHcloud Link Aggregation (OLA) technology is designed by our teams to increase your server’s availability, and boost the efficiency of your network connections. In just a few clicks, you can aggregate your network cards and make your network links redundant. This means that if one link goes down, traffic is automatically redirected to another available link.

Aggregation is based on IEEE 802.3ad, Link Aggregation Control Protocol (LACP) technology.

This guide explains how to configure OLA in the OVHcloud Control Panel.

Requirements

- a dedicated server in your OVHcloud account

- access to the OVHcloud Control Panel

- an Operating System / Hypervisor that supports the 802.3ad aggregation protocol (LACP)

Instructions

Configuring OLA in the OVHcloud Control Panel

To start configuring OLA, log in to the OVHcloud Control Panel and choose the Bare Metal Cloud section. Click on Dedicated Servers in the left-hand sidebar, then select your server from the list.

In the tab Network interfaces (1), click on the ... button (2) to the right of “Mode” in the OLA: OVHcloud Link Aggregation box. Next, click Configure private aggregation (2).

Make sure that both your interfaces, or interface groups, are selected and give the OLA a name. Click Confirm when your checks are complete.

This may take a few minutes. Once it is complete, the next step is to configure the interfaces in your operating system via a NIC link or NIC team. For the method to use, refer to the following guides for the most popular operating systems:

Restoring OLA to default values

To restore OLA to the default values, click on the ... button to the right of “Mode” in the OLA: OVHcloud Link Aggregation box. Then click Unconfigure private aggregation. Click Confirm in the popup menu.

Bonding is a driver that aggregates several network cards in order to increase bandwidth and provide high availability.

If a bond interface is built from two 100 Mbit/s network cards, the resulting throughput can reach 200 Mbit/s, depending on the mode used. The machine remains reachable if one of the interfaces stops responding.

Description

Prerequisites

Three standards can be used on the switch side to set up a bond interface:

The server must have:

The bonding.ko module is installed by default:

Modes

As noted above, the aggregate behaves differently depending on the selected mode.

Mode 0: Round robin, load balancing

Packets are transmitted sequentially on each active card in the aggregate. This mode increases bandwidth and provides fault tolerance.

Mode 1: Active-passive

This mode provides fault tolerance only. If one of the interfaces goes down, another link in the bond takes over.

Mode 2: Balance XOR

Mode 3: Broadcast

All traffic is sent on all interfaces.

Mode 4: 802.3ad

This mode relies on the IEEE 802.3ad dynamic link aggregation standard. All interfaces in the group are aggregated dynamically, which increases bandwidth and provides fault tolerance.

This requires that the switch supports 802.3ad and that the interfaces are compatible with mii-tool and/or ethtool.

Mode 5: balance-tlb

Adaptive transmit load balancing: only outgoing bandwidth is load balanced, according to the load computed from each interface's speed. Incoming traffic is assigned to the current interface. If it becomes inactive, another interface takes over its MAC address and becomes the current interface.

ethernet network-manager networking server

Ubuntu 16.04 lts server with 4 nics bonded on IEEE 802.3ad Dynamic link aggregation.

Upon reboot, the network service fails to load automatically and I have to do a manual start using sudo /etc/init.d/networking start

All the interfaces, including the bond, have an auto line in /etc/network/interfaces

This is supposed to start the network service upon reboot, but it’s not. What can I do to have the network start on boot?

Edit 1

Edit 2

Output of dmesg | grep -i bond0 :

Edit 3

The link is slow due to the old Cisco Catalyst Switch in place.

Edit 4

The bond seems to be initiating before the interfaces are raised

Edit 5

Problem was solved see the answer here

Situation

Dell PowerEdge 2950 running NextCloud Server over Ubuntu 16.04 lts with unstable bonded 802.3ad dynamic link aggregation network with intermittent running timeouts and boot errors.

Troubleshooting

After extensive server-side configuration testing (thanks to George for the support), the intermittent network problem persisted. I deduced a compatibility issue between the built-in Broadcom NICs and the PCI Intel NICs when bonded in Ubuntu 16.04 LTS.

Hardware Solution

Two dual-port Intel PCI NICs were installed in the 2950's riser PCI slots, the NVRAM was cleared, and the built-in Broadcom NICs were disabled in the BIOS. This was done to favor bandwidth, i.e. four 1 Gb NICs instead of the two built-in 1 Gb interfaces.

Server Solution

There are conflicting bonding configuration suggestions for Ubuntu 16.04 LTS; this is what worked for me.

1. Ran ifconfig -a to get the BIOS and device names of the new interfaces

2. As I had bonding preconfigured before, I ran sudo apt install --reinstall ifenslave

3. Checked that bonding is loaded at boot: sudo nano /etc/modules

NOTE: I removed rtc as it is deprecated in 16.04 LTS and I like a clean boot

4. Stopped networking; in my case I use sudo /etc/init.d/networking stop

5. Edited /etc/network/interfaces with the bond as follows. Note that you need to replace the interface names and the IP addresses with your own

6. Reloaded the kernel bonding module: sudo modprobe bonding

7. Created a bonding configuration /etc/modprobe.d/bonding.conf with

8. Restarted networking; in my case I use sudo /etc/init.d/networking restart

9. Checked the bond: cat /proc/net/bonding/bond0

10. Rebooted to see if it all holds up!
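Step 3 above can also be checked without opening an editor. The following is a minimal sketch; module_listed_at_boot is a hypothetical helper name, and it assumes the usual /etc/modules layout of one module name per line with # comments:

```sh
# Succeed if MODULE appears on a line of its own in a modules file
# (default: /etc/modules). Comment lines are ignored.
# module_listed_at_boot is an illustrative helper, not an existing tool.
module_listed_at_boot() {
    module=$1
    file=${2:-/etc/modules}
    grep -v '^[[:space:]]*#' "$file" |
        grep -qx "[[:space:]]*$module[[:space:]]*"
}
```

For example, module_listed_at_boot bonding && echo "bonding will be loaded at boot".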

I want to set up a KVM bridge with bonding on an Ubuntu Linux 16.04 LTS server. I have a total of four Intel I350 Gigabit network connections (NICs). I would like to bond eth0 through eth2 into one bonded interface called bond0 in 802.3ad dynamic link aggregation mode. How do I configure bridging and bonding with Ubuntu Server 16.04 LTS?

You need to set up bridging so that KVM/Xen or LXC container-based virtual machines (guests) show up on the same network as the host server. A bridged configuration needs bridge-utils installed. A bonded configuration needs the ifenslave utility. In this tutorial, you will learn how to create bonded and bridged networking on an Ubuntu 16.04 LTS server.

Fig.01: Sample setup – KVM bridge with Bonding on Ubuntu LTS Server

Install ifenslave on Ubuntu

Type the following command:

$ sudo apt install ifenslave bridge-utils

Bridge with Bonding on Ubuntu

Backup your /etc/network/interfaces file, run:

$ sudo cp /etc/network/interfaces /etc/network/interfaces.bakup

Edit /etc/network/interfaces, run:

$ sudo vi /etc/network/interfaces

First, create the bond0 interface config without an IP address and enslave eth0 and eth2 as follows:

auto bond0
iface bond0 inet manual
    bond-lacp-rate 1
    post-up ifenslave bond0 eth0 eth2
    pre-down ifenslave -d bond0 eth0 eth2
    bond-slaves none
    bond-mode 4
    bond-lacp-rate fast
    bond-miimon 100
    bond-downdelay 0
    bond-updelay 0
    bond-xmit_hash_policy 1

Next, edit eth0 and eth2 so that each has no IP address and names bond0 as its bond master:

auto eth0
iface eth0 inet manual
    bond-master bond0

auto eth2
iface eth2 inet manual
    bond-master bond0

Finally, create br0 bridge and assign an IP address and other IP settings including gateway:

auto br0
iface br0 inet static
    address 10.86.115.66
    netmask 255.255.255.192
    broadcast 10.86.115.127
    gateway 10.86.115.65
    # --------------------------------
    # Example: set dns server too
    # dns-nameservers 8.8.8.8 8.8.4.4 10.86.115.1
    # --------------------------------
    # Static route example
    #up route add -net 10.0.0.0/8 gateway 10.86.115.65
    #down route del -net 10.0.0.0/8
    #up route add -net 161.26.0.0/16 gateway 10.86.115.65
    #down route del -net 161.26.0.0/16
    # --------------------------------
    # Want to know what the default and more info
    # on the following options?
    # Read brctl(8) man page
    # --------------------------------
    bridge_ports bond0
    bridge_stp off
    bridge_fd 9
    bridge_hello 2
    bridge_maxage 12
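The address, netmask and broadcast values in the br0 stanza have to agree with each other. As a quick sanity check, the broadcast address can be derived from the other two. This is only a sketch; broadcast_of is a hypothetical helper, and it assumes well-formed dotted-quad input:

```sh
# Print the broadcast address for ADDRESS and NETMASK (dotted quads).
# The body runs in a subshell so the IFS change does not leak out.
# broadcast_of is an illustrative helper, not an existing tool.
broadcast_of() (
    IFS=.
    # Split both arguments on dots: $1..$4 = address, $5..$8 = netmask.
    set -- $1 $2
    # Broadcast = address with every host bit (inverted netmask) set.
    printf '%d.%d.%d.%d\n' \
        $(( $1 | (255 - $5) )) $(( $2 | (255 - $6) )) \
        $(( $3 | (255 - $7) )) $(( $4 | (255 - $8) ))
)
```

With the values used in this tutorial, broadcast_of 10.86.115.66 255.255.255.192 prints 10.86.115.127, which matches the broadcast line.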

Save and close the file. Reboot the server or restart the networking service:

$ sudo systemctl restart networking

Verify it:

$ brctl show

Sample outputs:

See bond0 status and other info:

$ more /proc/net/bonding/bond0

Sample outputs:

Ethernet Channel Bonding Driver: v3.7.1 (April 27, 2011)

Bonding Mode: IEEE 802.3ad Dynamic link aggregation
Transmit Hash Policy: layer3+4 (1)
MII Status: up
MII Polling Interval (ms): 100
Up Delay (ms): 0
Down Delay (ms): 0

802.3ad info
LACP rate: fast
Min links: 0
Aggregator selection policy (ad_select): stable
System priority: 65535
System MAC address: 00:25:90:4f:b0:6c
Active Aggregator Info:
    Aggregator ID: 1
    Number of ports: 1
    Actor Key: 9
    Partner Key: 43
    Partner Mac Address: b0:fa:eb:13:97:00

Slave Interface: eth0
MII Status: up
Speed: 1000 Mbps
Duplex: full
Link Failure Count: 0
Permanent HW addr: 00:25:90:4f:b0:6c
Slave queue ID: 0
Aggregator ID: 1
Actor Churn State: none
Partner Churn State: none
Actor Churned Count: 0
Partner Churned Count: 0
details actor lacp pdu:
    system priority: 65535
    system mac address: 00:25:90:4f:b0:6c
    port key: 9
    port priority: 255
    port number: 1
    port state: 63
details partner lacp pdu:
    system priority: 32768
    system mac address: b0:fa:eb:13:97:00
    oper key: 43
    port priority: 32768
    port number: 300
    port state: 61

Slave Interface: eth2
MII Status: down
Speed: Unknown
Duplex: Unknown
Link Failure Count: 0
Permanent HW addr: 00:25:90:4f:b0:6e
Slave queue ID: 0
Aggregator ID: 2
Actor Churn State: churned
Partner Churn State: churned
Actor Churned Count: 1
Partner Churned Count: 1
details actor lacp pdu:
    system priority: 65535
    system mac address: 00:25:90:4f:b0:6c
    port key: 0
    port priority: 255
    port number: 2
    port state: 71
details partner lacp pdu:
    system priority: 65535
    system mac address: 00:00:00:00:00:00
    oper key: 1
    port priority: 255
    port number: 1
    port state: 1
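The status file is long, and usually the first thing to check is which slaves have link. A minimal sketch, assuming the layout shown in the sample output (a "Slave Interface:" line followed by that slave's "MII Status:" line); bond_slave_status is a hypothetical helper name:

```sh
# Print "<slave> <MII status>" for each slave in a bonding status file
# (default: /proc/net/bonding/bond0).
# bond_slave_status is an illustrative helper, not an existing tool.
bond_slave_status() {
    awk -F': ' '
        /^Slave Interface:/ { slave = $2; next }
        # Only the first MII Status line after a slave header belongs to it;
        # the bond-wide MII Status line near the top is skipped.
        /^MII Status:/ && slave != "" { print slave, $2; slave = "" }
    ' "${1:-/proc/net/bonding/bond0}"
}
```

For the sample output above this would report eth0 as up and eth2 as down, which fits the "Number of ports: 1" line in the active aggregator.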

The practice of combining several network interfaces into one is called network bonding. The main goals of bonding are to increase throughput and bandwidth and to provide network redundancy. Bonding is also useful where fault tolerance is critical, for example in load-balanced connections. Bonding packages are available on Linux. Let's see how to configure network bonding on Ubuntu from the console. Before you begin, make sure you have the following:

- An administrator or root user account

- Two or more network interface adapters available.

Install the bonding module on Ubuntu

First we need to install the bonding module. Log in and open a command-line shell by pressing "Ctrl+Alt+T". Make sure the bonding module is configured and enabled on your Linux system. To load the module, enter the following command, followed by your user password.

The following query shows that bonding has been enabled:

If the module is missing from your system, install the ifenslave package with apt:

The last lines of the output below show that the system has successfully installed and enabled the module.

Temporary network bonding

A temporary bond lasts only until the next reboot; it disappears when the system restarts. Let's start with temporary bonding. First, we need to check how many interfaces are available on the system. The output below shows that two Ethernet interfaces, enp0s3 and enp0s8, are available.

First, change the state of both Ethernet interfaces using the following commands:

Now create the bond on the master device bond0 with the ip link command, as shown below. Be sure to use "802.3ad" as the bonding mode.

After creating the bond, add both interfaces to the master device, as shown below.

You can confirm that the bond was created using the query below.
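The original commands for these steps are not reproduced here, so the following is only a sketch of how the temporary setup could look with iproute2. It prints the commands rather than running them, so the list can be reviewed first; temp_bond_commands is a hypothetical helper name, and bringing the slaves down before enslaving them follows the order described above:

```sh
# Print (not run) iproute2 commands for a temporary 802.3ad bond.
# Usage: temp_bond_commands BOND SLAVE...
# temp_bond_commands is an illustrative helper, not an existing tool.
temp_bond_commands() {
    bond=$1; shift
    # Bring every future slave down first, as in the steps above.
    for nic in "$@"; do
        echo "ip link set $nic down"
    done
    # Create the bond device in 802.3ad mode.
    echo "ip link add $bond type bond mode 802.3ad"
    # Attach each slave to the bond.
    for nic in "$@"; do
        echo "ip link set $nic master $bond"
    done
    echo "ip link set $bond up"
}
```

For example, temp_bond_commands bond0 enp0s3 enp0s8 lists the commands for the two interfaces named above; the list could then be run as root, and the result inspected with ip link show bond0 or cat /proc/net/bonding/bond0.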

Permanent network bonding

To create a permanent bond, you need to edit the network interfaces configuration file. Open the file in the nano editor, as shown below.

Now update the file with the following configuration. Remember to set bond_mode to 4 or 0. Save the file and exit.

To enable the bond, change the state of both slave interfaces and of the master device using the following query.

Now restart the networking service using the systemctl command below.

You can also use the command below instead of the one above.

Now you can check whether the master interface is up using the following query:

You can check the status of the newly created bond using the query below.
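The configuration file contents for the permanent setup are not reproduced here. As a hedged sketch only, the helper below prints an /etc/network/interfaces fragment in the dashed bond-master style used by the Ubuntu examples earlier in this document (the text above writes the option as bond_mode). The helper name bond_interfaces_stanza, the use of DHCP, and the miimon value of 100 are assumptions for illustration:

```sh
# Print an /etc/network/interfaces fragment for an 802.3ad (mode 4) bond.
# Usage: bond_interfaces_stanza BOND SLAVE...
# bond_interfaces_stanza is an illustrative helper, not an existing tool.
bond_interfaces_stanza() {
    bond=$1; shift
    # Each slave is configured manually and points at its bond master.
    for nic in "$@"; do
        printf 'auto %s\niface %s inet manual\n    bond-master %s\n\n' \
            "$nic" "$nic" "$bond"
    done
    # The bond itself carries the IP configuration (DHCP assumed here).
    printf 'auto %s\niface %s inet dhcp\n' "$bond" "$bond"
    printf '    bond-mode 4\n    bond-miimon 100\n    bond-slaves none\n'
}
```

Running bond_interfaces_stanza bond0 enp0s3 enp0s8 prints a fragment that can be reviewed and adapted before it is placed in the interfaces file.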


Configuration

One central question from the admin’s point of view is how the bonding or teaming solution can be configured and how practical administration works in everyday life. Earlier, you already saw that bonding.ko in particular is deeply integrated into the kernel and that support for bonding is therefore part of the standard scope of delivery in current distributions.

However, this does not mean that the configuration is automatically intuitive, simple, or easy to understand. Anyone who has ever locked themselves out of a newly installed CentOS system by putting a wrong bonding configuration into operation, which could only be corrected through the BMC interface afterward, will be familiar with the problem.

As with the architecture, libteam and bonding are fundamentally different when it comes to configuration and handling. On the one hand, the kernel bonding driver has to be processed in userland by ifenslave. The configuration in CentOS and related distros is generally not much more intuitive than this tool, and not many admins will be able to edit the necessary configuration files to achieve a working bonding configuration at the end of the day without referring to the documentation.

Ubuntu, Debian, and SLES are not much smarter. Admins can get into a terrible mess if they don’t want to operate the bonding modules with the standard parameters but attempt to define custom values (e.g., for the MII link monitoring frequency). In the worst case, only a modinfo against the bonding module will help you find the required parameter – combined with the system documentation to find out where to write the parameter for the bonding driver to actually use it.

All told, the user experience for configuring bonding in Linux is about the same as the standard 20 years ago, which is mainly because it was created about 20 years ago.

JSON Configuration

The Team daemon works in a completely different way. The administrator stores the configuration for the service in the form of a JSON file in the /etc folder, although the path can change depending on the distribution. In the teamd.conf file, you then add everything that teamd needs to know to create the team devices after it starts up.

Parameters desired by the user, such as the Team mode or the ARP monitoring mode, can be defined in teamd.conf for each device. A nice man page (man 5 teamd.conf) for the file explains all relevant parameters in detail, including the respective default values (Figure 5).
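To give an idea of what such a file looks like, the sketch below writes a small active-backup configuration to a path of your choice. The device name team0, the port names eth1 and eth2, and the helper name write_teamd_conf are assumptions for illustration; the keys follow the layout described in man 5 teamd.conf:

```sh
# Write a minimal teamd configuration (active-backup, ethtool link watch)
# to the file given as the first argument.
# write_teamd_conf is an illustrative helper, not an existing tool.
write_teamd_conf() {
    cat > "$1" <<'EOF'
{
  "device": "team0",
  "runner": { "name": "activebackup" },
  "link_watch": { "name": "ethtool" },
  "ports": {
    "eth1": {},
    "eth2": {}
  }
}
EOF
}
```

For example, write_teamd_conf ./teamd.conf creates the file in the current directory, from where it can be copied to wherever your distribution expects it.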

If you configure your team devices in this way, it's almost boring: Gone are the days when restarting the network configuration was associated with the inherent risk of locking yourself out of the system. Team daemon configuration in JSON format is well structured, equipped with understandable keywords, and excellently documented. Again, a point clearly goes to libteam and teamd, putting the score at 2 to 1.

Basic Functions